On July 1, Max Wang, a Boston-based software engineer who was leaving Facebook after more than seven years, shared a video on the company’s internal discussion board that was meant to serve as a warning.

“I think Facebook is hurting people at scale,” he wrote in a note accompanying the video. “If you think so too, maybe give this a watch.”

Most employees on their way out of the “Mark Zuckerberg production” typically post photos of their company badges along with farewell notes thanking their colleagues. Wang opted for a clip of himself speaking directly to the camera. What followed was a 24-minute clear-eyed hammering of Facebook’s leadership and decision-making over the previous year.

The video was a distillation of months of internal strife, protest, and departures that followed the company’s decision to leave untouched a post from President Donald Trump that seemingly called for violence against people protesting the police killing of George Floyd. And while Wang’s message wasn’t necessarily unique, his assessment of the company’s ongoing failure to protect its users — an evaluation informed by his lengthy tenure at the company — provided one of the most stunningly pointed rebukes of Facebook to date.

“We are failing,” he said, criticizing Facebook’s leaders for catering to political concerns at the expense of real-world harm. “And what's worse, we have enshrined that failure in our policies.”

Max Wang, an engineer who worked at Facebook for seven years, posted an internal video message on July 1 as he prepared to leave the company, arguing that "we are failing" and that "we have enshrined that failure in our policies." BuzzFeed News obtained audio of that message, which has been edited to remove a portion in which Wang thanks former colleagues but is otherwise left intact.

While external criticisms of Facebook, which has roughly 3 billion users across its various social platforms, have persisted since the run-up to the 2016 presidential election, they’ve rarely sparked wide-scale dissent inside the social media giant. As it weathered one scandal after another — Russian election interference, Cambridge Analytica, Rohingya genocide in Myanmar — over the past three and a half years, Facebook’s stock price rose and it continued to recruit and retain top talent. In spite of the occasional internal dustup, employees generally felt the company was doing more good than harm. At the very least, they avoided publicly airing their grievances.

“This time, our response feels different,” wrote Facebook engineer Dan Abramov in a June 26 post on Workplace, the company’s internal communications platform. “I’ve taken some [paid time off] to refocus, but I can’t shake the feeling that the company leadership has betrayed the trust my colleagues and I have placed in them.”

Messages like those from Wang and Abramov illustrate how Facebook’s handling of the president’s often divisive posts has caused a sea change in its ranks and led to a crisis of confidence in leadership, according to interviews with current and former employees and dozens of documents obtained by BuzzFeed News. The documents — which include company discussion threads, employee survey results, and recordings of Zuckerberg — reveal that the company was slow to take down ads with white nationalist and Nazi content reported by its own employees. They demonstrate how the company’s public declarations about supporting racial justice causes are at odds with policies forbidding Facebookers from using company resources to support political matters. They show Zuckerberg being publicly accused of misleading his employees. Above all, they portray a fracturing company culture.

Frustrated and angry, employees are now challenging Zuckerberg and leadership at companywide meetings, staging virtual walkouts, and questioning if their work is making the world a better place. The turmoil has reached a point where Facebook's CEO recently threatened to fire employees who “bully” their colleagues.

As it heads into a US presidential election where its every move will be dissected and analyzed, the social network is facing unprecedented internal dissent as employees worry that the company is wittingly or unwittingly exerting political influence on content decisions related to Trump, and fear that Facebook is undermining democracy.

Yaël Eisenstat, Facebook's former election ads integrity lead, said the employees’ concerns reflect her experience at the company, which she believes is on a dangerous path heading into the election.

“All of these steps are leading up to a situation where, come November, a portion of Facebook users will not trust the outcome of the election because they have been bombarded with messages on Facebook preparing them to not trust it,” she told BuzzFeed News.

She said the company’s policy team in Washington, DC, led by Joel Kaplan, sought to unduly influence decisions made by her team, and the company’s recent failure to take appropriate action on posts from President Trump shows employees are right to be upset and concerned.

“These were very clear examples that didn't just upset me, they upset Facebook’s employees, they upset the entire civil rights community, they upset Facebook’s advertisers. If you still refuse to listen to all those voices, then you're proving that your decision-making is being guided by some other voice,” she said.

In a broad statement responding to a list of questions for this story, a Facebook spokesperson said the company has a rigorous policy process and is transparent with employees about how decisions are made.

“Content decisions at Facebook are made based on our best, most even, application of the public policies as written. It will always be the case that groups of people, even employees, see these decisions as inconsistent; that’s the nature of applying policies broadly,” the spokesperson said. “That’s why we’ve implemented a rigorous process of both consulting with outside experts when adopting new policies as well as soliciting feedback from employees and why we’ve created an independent oversight board to appeal content policy decisions on Facebook.”

In his note, Abramov, who’s worked at the social network for four years, compared Facebook to a nuclear power plant. Facebook, unlike traditional media sources, can generate “social energy” at a scale never seen before, he said.

“But even getting small details wrong can lead to disastrous consequences,” he wrote. “Social media has enough power to damage the fabric of our society. If you think that’s an overstatement, you aren’t paying attention.”

“I Know Doing Nothing Is Not Acceptable”

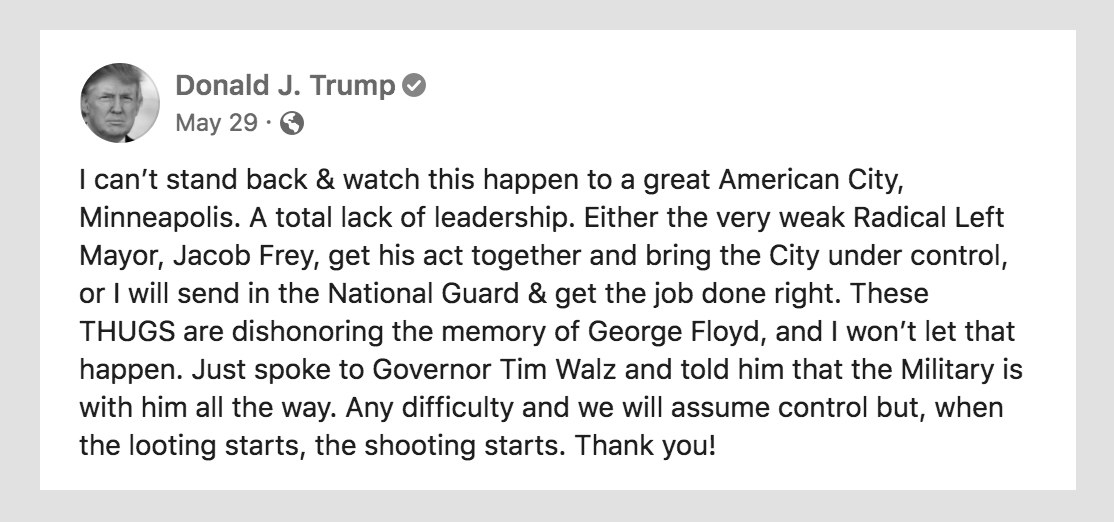

On May 28, as protests against police brutality raged in Minneapolis and around the country, President Donald Trump posted identical messages to his Facebook and Twitter accounts, which have a collective 114 million followers.

“Just spoke to Governor Tim Walz and told him that the Military is with him all the way,” the president wrote that night. “Any difficulty and we will assume control but, when the looting starts, the shooting starts.”

Within a matter of hours, Twitter placed Trump’s post behind a warning label, noting it violated its rules around glorifying violence. Meanwhile, Facebook did nothing. It had decided that the phrase “when the looting starts, the shooting starts” — which has historical ties to racially oppressive police violence — did not constitute a violation of its terms of service.

In explaining the decision the next day, Zuckerberg said that while he had a “visceral negative reaction” to the post, Facebook policies allowed for “discussion around the state use of force.” Moreover, he argued that, in spite of the phrase’s historical context, it was possible that it could have been interpreted to mean the president was simply warning that looting could lead to violence. (Axios later reported that Zuckerberg had personally called Trump the day after the post.)

Employees, already angered by the company’s failure to take action against a post from Trump earlier that May containing mail-in ballot misinformation, revolted. In a Workplace group called “Let’s Fix Facebook (the company),” which has about 10,000 members, an employee started a poll asking colleagues whether they agreed “with our leadership’s decisions this week regarding voting misinformation and posts that may be considered to be inciting violence.” About 1,000 respondents said the company had made the wrong decision on both posts, more than 20 times the number of responses for the third-highest answer, “I’m not sure.”

“I don't know what to do, but I know doing nothing is not acceptable,” Jason Stirman, a design manager at Facebook, wrote on Twitter that weekend, one of a lengthy stream of dissenting voices. “I'm a FB employee that completely disagrees with Mark's decision to do nothing about Trump's recent posts, which clearly incite violence. I'm not alone inside of FB. There isn't a neutral position on racism.”

The following Monday, hundreds of employees — most working remotely due to the company’s coronavirus policies — changed their Workplace avatars to a white and black fist and called out sick in a digital walkout.

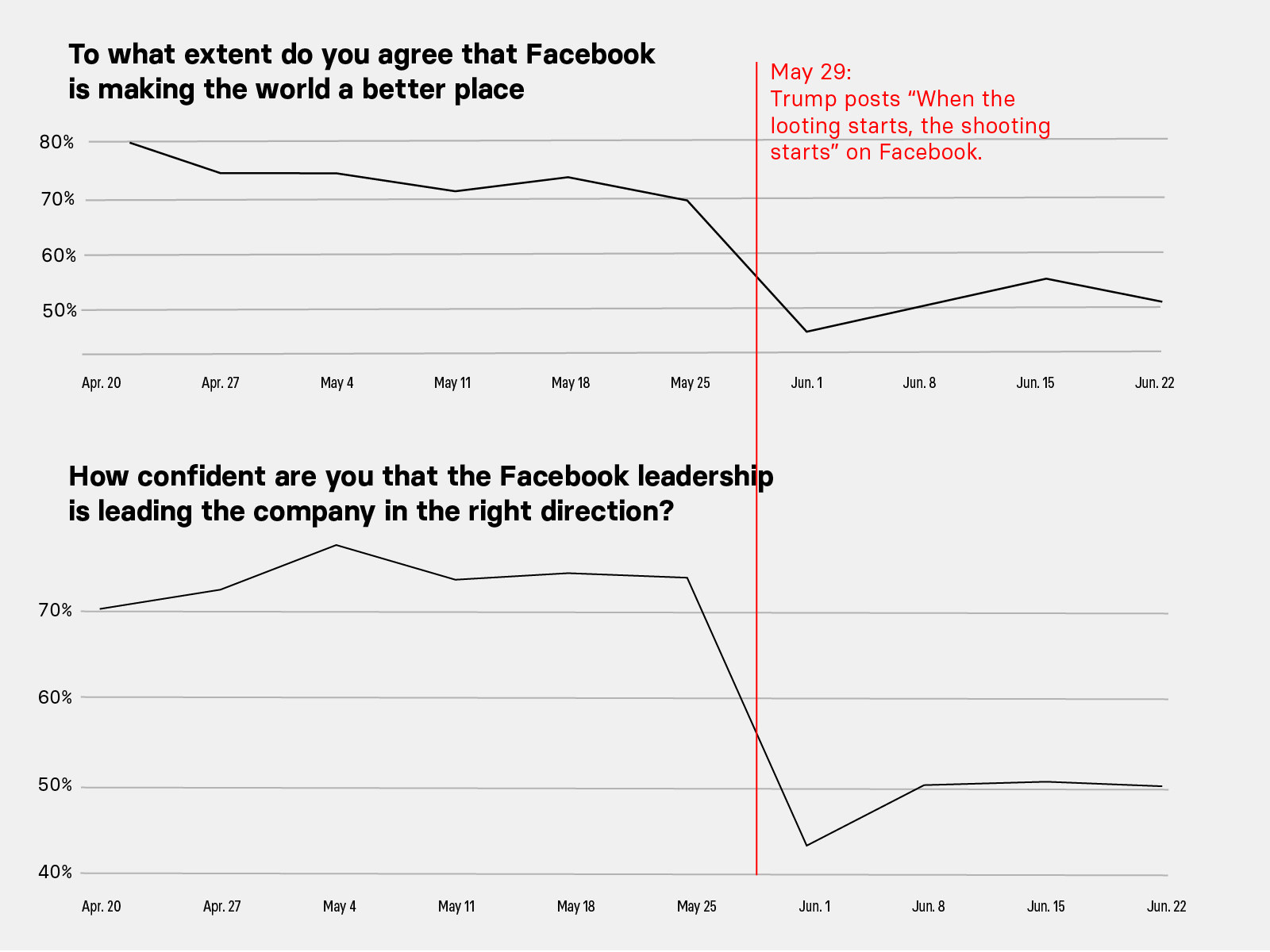

As Facebook grappled with yet another public relations crisis, employee morale plunged. Worker satisfaction metrics — measured by “MicroPulse” surveys that are taken by hundreds of employees every week — fell sharply after the ruling on Trump’s "looting" post, according to data obtained by BuzzFeed News.

On June 1, the day of the walkout, about 45% of employees said they agreed with the statement that Facebook was making the world better — down about 25 percentage points from the week before. That same day, Facebook’s internal surveys showed that around 44% of employees were confident in “Facebook leadership leading the company in the right direction” — a 30 percentage point drop from May 25. Responses to that question have stayed around that lower mark as of earlier this month, according to data seen by BuzzFeed News.

Culturally, Facebook has become increasingly divided, with some company loyalists pushing the idea that a silent majority supported the call made on the Trump post.

On Blind, a forum app that requires work emails for people to anonymously discuss their employer, some unnamed employees demanded that those involved in the walkout be fired. One thread focused specifically on Jason Toff, a director of product management at Facebook, who had tweeted following the Trump decision that he was “not proud of how we’re showing up.”

“His major responsibility is to acquire top talent and motivate his team, and that’s impossible to do when you publicly share that you aren’t proud of where you work at,” wrote one Blind user on a thread titled “Fire Jason Toff.”

Other anonymous Facebook employees vilified May Zhou, a software engineer who had asked at an all-hands meeting how many Black employees had been involved in the Trump "looting" post decision. They called her "disrespectful" and a “sjw” — pejorative shorthand for “social justice warrior.” (In response to Zhou’s question, Zuckerberg said only one Black employee in the company’s Austin office had been consulted and that Facebook’s political speech policies needed tweaking.)

Toff declined to speak for this story. Zhou did not return a request for comment.

This ongoing contention and erosion of Facebook’s culture has infuriated Zuckerberg. In a June 11 live Q&A with employees, he pointedly addressed it.

“I've been very worried about … the level of disrespect and, in some cases, of vitriol that a lot of people in our internal community are directing towards each other as part of these debates,” he said. “If you're bullying your fellow colleagues into taking a position on something, then we will fire you.”

“Our Community Standards Are Fundamentally Broken”

Facebook employees who spoke to BuzzFeed News pointed to the company’s lack of consistency and poor communication around enforcement of its community standards as a key frustration. In late May, following a decision by Twitter to place a misleading post about mail-in ballots from Trump behind a warning label, Zuckerberg appeared on Fox News to chastise his competitor for trying to be an “arbiter of truth.”

“I think in general, private companies probably shouldn’t be — or especially these platform companies — shouldn’t be in the position of doing that,” he said in the interview. Trump had made the same false claim on Facebook, which took no action against it.

Later that week, when Trump made his “looting” statement and Twitter moderated it and Facebook did not, Zuckerberg — the “ultimate decision-maker” according to Facebook’s head of communications — defended his position. “Unlike Twitter, we do not have a policy of putting a warning in front of posts that may incite violence because we believe that if a post incites violence, it should be removed regardless of whether it is newsworthy, even if it comes from a politician,” he wrote in a post on May 29.

It took just four days for Zuckerberg to change his mind. In comments at a companywide meeting on June 2 that were first reported by Recode, Facebook’s founder said the company was considering adding labels to posts from world leaders that incite violence. He followed that up with a Facebook post three days later, in which he declared “Black lives matter,” and made promises that the company would review policies on content discussing “excessive use of police or state force.”

“What material effect does any of this have?” one employee later asked on Facebook’s Workplace, openly challenging their CEO. “Commitments to review offer nothing material. Has anything changed for you in a meaningful way? Are you at all willing to be wrong here?”

On June 26, nearly a month later, Zuckerberg posted a clarification to his remarks, noting that any post that is determined to be inciting violence will be taken down.

The company’s June 18 decision to remove Trump campaign ads that featured a triangle symbol used by Nazis to identify political prisoners didn’t make things any better. Speaking at an all-hands meeting hours after the ads were taken down, Zuckerberg said the action was an easy call and evidence of Facebook’s commitment to applying its policies equally.

“This decision was not a particularly close call from my perspective,” he said. “Our position on all this stuff is we want to allow as wide open an aperture of free expression as possible … but if something is over the line, no matter who it is, we will take it down.”

Documents reviewed by BuzzFeed News, however, reveal Facebook's decision to remove the Trump ads was not as simple as Zuckerberg claimed. In fact, the company failed to act on them until after it faced outside pressure, and despite internal alerts from its own employees.

On the morning before the ads were removed, a heated discussion took place among Facebook employees after at least nine different people said they had reported the content but were told it did not violate company policy.

“I reported it and called it out as both possible hate speech and threat of violence. This is apparently not a violation of our CS because our CS are fundamentally broken,” Natalie Troxel, a user experience employee, wrote on Workplace, referencing the company’s community standards.

Kaitlin Sullivan, whose LinkedIn profile says she leads “the Americas branch of Facebook’s content policy team,” replied, saying the ad was still being evaluated and that “the triangle without any more context doesn’t clearly violate the letter there.” It wasn’t “helpful” to describe the company’s process as broken, she added.

“I stand by what I said ... A world leader is promoting content on our platform that uses explicit Nazi imagery,” Troxel responded.

“The fact that this has even remained on our platform for this long is troubling,” another employee added.

Troxel and Sullivan did not respond to requests for comment for this story.

A Facebook employee who spoke to BuzzFeed News anonymously for fear of retaliation said they were “flabbergasted” the ads weren't immediately ruled violative. “There’s a real culture within Facebook to assume good intent,” this person said. “To me, this was a case where you cannot assume good intent for a symbol that could be Nazi imagery.” They also were bothered that Facebook took action on the ad only after receiving questions from the Washington Post — more than 12 hours after it had been flagged by employees.

“It certainly looks like a decision would not have been made the way it was had there not been media pressure and a larger than normal involvement of Facebook employees on those posts and threads on Workplace,” the anonymous employee said.

The incident was yet another example of Facebook declining to act on violative content only to change its mind following public criticism. And once again, it raised questions about how decisions are made, and whether policies are applied consistently or in reaction to negative publicity or political concerns.

Eisenstat, the former Facebook election ads integrity lead, said the company’s failures to act quickly on the Trump ads and to moderate his “looting” post are further evidence that political considerations and outside pressure are influencing policy decisions.

“They put political considerations over enforcing their policies to the letter of the law,” she said. “I can say for my time there that more than once the [Washington] policy team weighed in on appeals and decisions that made it clear there was a political consideration factoring into how we were enforcing our policy.”

A related scenario played out earlier this month over an ad run by a white nationalist Facebook page, “White Wellbeing Australia.” “White people make up just 8% of the world's population so if you flood all white majority countries with nonwhites you eliminate white children forever,” the ad proclaimed.

Facebook removed the ad and prevented the page from running paid content in the future only after being contacted by BuzzFeed News on Wednesday, July 8. But that move too came after a company employee flagged the ad and was told it wasn’t violative.

“I reported the page and the post and was told that it doesn’t meet the threshold for a content violation,” Facebook project manager Matthew Brennan wrote on Workplace after the publication of BuzzFeed News’ story. “There is no doubt this is a white supremacy group and the post in question is not trying to hide that fact.”

Brennan, who saw the ad appear in his News Feed, said friends and family in Australia raised concerns about the ad over the weekend, leaving him frustrated that “there was nothing that could be done about it.”

“It wasn’t exactly filling me with pride to work at Facebook,” Brennan concluded.

“I get the same from my wife, friends, and family,” another employee responded. “These decisions are going to be on the wrong side of history.”

Brennan did not respond to a request for comment.

In the end, Facebook removed the ad and prevented the white nationalist page from running future ads. The page, however, continues to exist on the social network.

“Diversity Is A Huge Problem”

In his farewell video, Wang accused Zuckerberg of "gaslighting" employees and a “bait and switch” during an early June meeting in which the Facebook CEO explained the decision on the Trump “looting” post. Why was Zuckerberg only talking about whether Trump’s comments fit the company’s rules, and not about fixing policies that allowed for threats that could hurt people in the first place, he asked.

“Watching this just felt like someone was sort of slowly swapping out the rug from under my feet,” Wang said. “They were swapping concerns about morals or justice or norms with this concern about consistency and logic, as if it were obviously the case that ‘consistency’ is what mattered most.”

What the departing engineer said echoed what civil rights groups such as Color of Change have been saying since at least 2015: Facebook is more concerned with appearing unbiased than making internal adjustments or correcting policies that permit or enable real-world harm.

In a June 19 companywide meeting, for example, Zuckerberg turned a question about the decision-making influence of Joel Kaplan, Facebook’s vice president of global policy and a former member of President George W. Bush’s administration, into a discussion about the need for ideological diversity. Kaplan, already a controversial figure within Facebook because of his public support for Supreme Court Justice Brett Kavanaugh during his heated 2018 confirmation hearings, has drawn ire from external and internal critics alike, who say that his moves to placate conservative Facebook power users like Trump are driven by politics and not any dedication to policy.

Eisenstat told BuzzFeed News that a member of Kaplan's Washington policy team had attempted to influence ad enforcement decisions being considered by her team, which she considered highly inappropriate. In one example, her team was evaluating whether to remove an ad placed by a conservative organization.

“But then a policy person chimed in and gave the both-sides argument. They actually wrote something like, ‘There's bad behavior on both sides.’ And I remember thinking, What does that have to do with anything?” she said.

“When you have the policy folks weighing in heavily on how you are enforcing that, to me, is where it makes it crystal clear this is not a strict letter-of-the-law enforcement. Because if it was, then policy should never intervene,” Eisenstat added.

Instead of answering the question about Kaplan’s influence at the meeting, Zuckerberg argued that his vice president brought an important conservative viewpoint to the table. “The population out there in the community we serve tends to be on average ideologically a little bit more conservative than our employee base,” Zuckerberg said. “Maybe ‘a little’ is an understatement.”

For Zuckerberg, Kaplan’s Republican leanings contributed to “a good diversity of views” within the company. But for critics, the idea that moderating calls for violence or hate speech or Nazi imagery is little more than wrangling a political disagreement is disingenuous and prevents the company from taking a stand on civil rights.

“He uses ‘diverse perspective’ as essentially a cover for right-wing thinking when the real problem is dangerous ideologies,” Brandi Collins-Dexter, a senior campaign director at Color of Change, told BuzzFeed News after reading excerpts of Zuckerberg’s comments. “If you are conflating conservatives with white nationalists, that seems like a far deeper problem because that’s what we’re talking about. We’re talking about hate groups and really specific dangerous ideologies and behavior.”

She said Zuckerberg’s assertion that the team making key content and policy decisions is diverse was “a huge problem.”

“The fact that he would look at the people around him on the board and in decision-making positions and pat himself on the back saying it has diversity is a huge problem right there,” Collins-Dexter added, referencing the company’s overwhelmingly white C-suite.

Facebook’s recently completed civil rights audit reiterated concerns that the company’s leadership and decision-making process are falling short. “Many in the civil rights community have become disheartened, frustrated and angry after years of engagement where they implored the company to do more to advance equality and fight discrimination, while also safeguarding free expression,” the auditors wrote after their two-year assessment.

In light of that report, Facebook has promised change. The company added diversity and inclusion responsibilities to biannual performance reviews and elevated its chief diversity officer to report to Sheryl Sandberg, Facebook’s chief operating officer. In mid-June, Sandberg said the company would commit $200 million to Black-owned businesses and organizations on top of a $10 million commitment to racial justice organizations made earlier that month.

Even that decision was not easy, according to internal communications. Asked during a June 11 all-hands meeting whether Facebook would, like many of its Silicon Valley peers, introduce a donation-matching program, presumably for Black justice organizations, Zuckerberg asked employees to be realistic because they were in “the middle of a global recession.”

“Our revenue is significantly less than we expected it to be,” he said, a week before Sandberg announced the $200 million commitment.

Employees who have tried to use company resources on racial justice causes have also been frustrated. Every month, each of the company’s full-time employees gets $250 in ad credits to be used on the platform. Some employees, however, found that they were unable to use those credits to help boost civil rights groups that had drawn attention during the police brutality protests that swept the nation earlier this summer.

An internal document provided to BuzzFeed News noted that employee credits may not be used for ads “related to politics or issues of national importance.” For employees in the US, that means issues relating to “civil and social rights,” “environmental politics,” “guns,” “health,” and several other defined categories are off-limits.

“It’s infuriating,” a current employee told BuzzFeed News.

Good Politics, Bad Leadership

Outside calls for Facebook to change its policies — like the Stop Hate For Profit ad boycott that now includes Coca-Cola, Starbucks, and Verizon — have further ratcheted up internal tensions.

“Two years ago, I wouldn’t have had conversations with my colleagues where I would be supporting the advertising boycott,” one employee told BuzzFeed News. “But we are having those conversations now.”

This is largely unprecedented for Facebook, a company unaccustomed to widespread internal dissent. But Wang’s departure video, the virtual walkout, and the recent drop in employee satisfaction show Zuckerberg’s approach and fumbling explanations and reversals are beginning to take an internal toll.

“I think Facebook is getting trapped by our ideology of free expression, and the easy temptation of just trying to stay consistent with an ideology,” Wang said in his video.

Wang’s departure thread kicked off a discussion, attracting comments from people like Yann LeCun, Facebook’s head of artificial intelligence. After thanking Wang for his thoughts, the executive pointed employees to his own June 2 post in which he expressed concern that Facebook’s policy and content decisions are not geared toward protecting democracy.

“American Democracy is threatened and closer to collapse than most people realize,” LeCun wrote on June 2. “I would submit that a better underlying principle to content policy is the promotion and defense of liberal democracy.”

LeCun did not respond to requests for comment.

Other employees, like Abramov, the engineer, have seized the moment to argue that Facebook has never been neutral, despite leadership’s repeated attempts to convince employees otherwise, and as such needed to make decisions to limit harm. Facebook has proactively taken down nudity, hate speech, and extremist content, while also encouraging people to participate in elections — an act that favors democracy, he wrote.

“As employees, we can’t entertain this illusion,” he said in his June 26 memo titled “Facebook Is Not Neutral.” “There is nothing neutral about connecting people together. It’s literally the opposite of the status quo.”

Zuckerberg seems to disagree. On June 5, he wrote that Facebook errs on the “side of free expression” and made a series of promises that his company would push for racial justice and fight for voter engagement.

The sentiment, while encouraging, arrived unaccompanied by any concrete plans. On Facebook’s internal discussion board, the replies rolled in.

“There is exactly one impressive thing about this post: by apparently committing to nothing at all but acting like you have, you’ve managed to placate some of us,” wrote one employee. “It’s good politics. It’s bad leadership.”