Nearly 3,000 miles away from Facebook’s Menlo Park headquarters, in an old, beige office building in downtown Manhattan, a group of company employees is working on projects that seem better suited for science fiction than social networking. The team, Facebook Artificial Intelligence Research — known internally as FAIR — is focused on a singular goal: to create computers with intelligence on par with humans. While still far from its finish line, the group is making the sort of progress few believed possible at the turn of the decade. Its AI programs are drawing pictures almost indistinguishable from those by human artists and taking quizzes on subject matter culled from Wikipedia. They’re playing advanced video games like Starcraft. Slowly, they’re getting smarter. And someday, they could change Facebook from something that facilitates interaction between friends into something that could be your friend.

For these reasons and others, FAIR isn’t your typical Facebook team. Its members do not work directly on the $410 billion company’s collection of mega popular products: Instagram, WhatsApp, Messenger, and Facebook proper. Its ultimate goal is likely decades off, and may never be reached. And it’s led not by your typical polished Silicon Valley overachiever but by Yann LeCun, a 56-year-old academic who’s experienced real failure in his life and managed to come back. His once-rejected theories about artificial intelligence are today considered world-class, and his vindication is Facebook’s bounty.

“Your interaction with the digital world, your phone, your computer, is going to be transformed,” LeCun told BuzzFeed News of what may be.

FAIR is improving computers’ ability to see, hear, and communicate on their own, and its findings now permeate Facebook’s products, touching everything from the News Feed ranking to cameras and photo filters. And Facebook is investing, big time — not simply because artificial intelligence is interesting, but because it’s necessary. In all corners of tech today, companies are competing on the basis of their AI. Uber’s AI-powered autonomous cars are core to its ride-hailing strategy. Google’s AI-reliant Google Home smart speaker is answering queries users once typed in the search bar (and, long before that, looked up in the encyclopedia). Amazon is building convenience stores with artificially intelligent cashiers in an effort to crack the $674 billion edible grocery market.

And at Facebook, AI is everywhere. Its AI-powered photo filters, for instance, are helping it fend off a challenge from Snapchat. Its AI’s ability to look at pictures, see what’s inside them, and decide what to show you in its feeds is helping the company provide a compelling experience that keeps you coming back. And similar technology is monitoring harassing, terroristic, and pornographic content and flagging it for removal.

“The experiences people have on the whole family of Facebook products depend critically on AI,” said Joaquin Candela, the head of Facebook’s Applied Machine Learning group, or AML, which puts research into action on the platform itself. “Today, Facebook could not exist without AI. Period.”

As the field becomes more advanced, Facebook will rely on LeCun and his team to help it stay ahead of competitors, new or current, who are likely to embrace the science.

After years of criticism and marginalization, LeCun finally has it all: 80 researchers, the backing of Facebook’s vast financial resources, and mainstream faith in his work. All he has to do now is deliver.

Sight

From an early age, LeCun believed he could get computers to see. Facial recognition and image detection may be standard today, but when LeCun was a university student in Paris in the early 1980s, computers were effectively blind, unable to make sense of anything within images or to figure out what was appearing inside their cameras’ lenses. It was in college that LeCun came across an approach to the field that had remained largely unexplored since the 1960s, but that he thought could potentially “allow machines to learn many tasks, including perception.”

The approach, called an artificial neural network, takes systems of small, interconnected sensors and has them break down content like images into tiny parts, then identify patterns and decide what they’re seeing based on their collective inputs. After reading the arguments against neural nets (namely, that they were hard to train and not particularly powerful), LeCun decided to press ahead anyway, pursuing a PhD where he’d focus on them despite the doubts. “I just didn't believe it,” he said of the criticism.
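As a rough illustration of that idea, here is a minimal sketch of two artificial "neurons" looking at a tiny 2x2 image. Everything in it is an assumption made for illustration: the weights are hand-picked rather than learned, and names such as `unit` and `classify` are hypothetical, not anything taken from LeCun's work.

```python
def relu(x):
    """Simple nonlinearity: pass positive values through, clip negatives to zero."""
    return max(0.0, x)

def unit(inputs, weights, bias):
    """One artificial neuron: a weighted sum of its inputs, then the nonlinearity."""
    return relu(sum(i * w for i, w in zip(inputs, weights)) + bias)

# A 2x2 image flattened as [top-left, top-right, bottom-left, bottom-right].
# Hand-picked (not learned) weights: each unit responds to one simple pattern.
LEFT_COLUMN = [1, -1, 1, -1]   # fires when the left column is bright
TOP_ROW = [1, 1, -1, -1]       # fires when the top row is bright

def classify(pixels):
    """Decide what the image shows by comparing the two units' responses."""
    left = unit(pixels, LEFT_COLUMN, -1.0)
    top = unit(pixels, TOP_ROW, -1.0)
    if left == top:
        return "unclear"
    return "left column" if left > top else "top row"

print(classify([1, 0, 1, 0]))  # bright left column
print(classify([1, 1, 0, 0]))  # bright top row
```

A real network differs mainly in scale and in where the weights come from: it has many more units arranged in layers, and the weights are adjusted automatically from examples instead of being written by hand.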

Hard times in the artificial intelligence field occur with such frequency and intensity that they have their own special name: AI Winter. These periods come about largely when researchers’ results don’t live up to their boasts, making it seem like the science doesn’t work, causing funding and interest to dry up as a result, and technological progress along with it.

LeCun has seen his fair share of AI Winter. After he settled into an AI research job at Bell Labs in the mid ’90s, internal strife at AT&T caused his team there to come apart just as it was rolling out check-reading ATMs (neural-net-powered technology that’s still in use today) and just as LeCun believed it was making clear progress. “The whole project was disbanded essentially on the day that it was becoming really successful,” LeCun said. “This was really depressing.”

At the same time, other methods were gaining favor with mainstream researchers. These methods would later fall back out of favor, but their rise was enough to push neural nets — and LeCun, their longtime champion — to the margins of the field. In the early 2000s, other academics wouldn’t even allow him to present his papers at their conferences. “The computer-vision community basically rejected him,” Geoff Hinton, a neural net pioneer who’s currently an engineering fellow at Google and a professor at the University of Toronto, told BuzzFeed News. “The view was that he was carrying on doing things that had been promising in the ‘80s but he should have got over it by now,” Hinton explained.

“That’s not the view anymore,” he added.

Other neural net researchers encountered similar problems at the time. Yoshua Bengio, a professor at the University of Montreal and head of the Montreal Institute for Learning Algorithms, had a hard time finding grad students willing to work with him. “I had to twist the arms of my students to work in this area because they were scared of not having a job when they would finish their PhD,” he told BuzzFeed News.

In 2003, LeCun laid the foundation for his redemption. That year, he joined New York University’s faculty and also got together with Hinton and Bengio in a largely informal coalition to revive neural nets. “We started what I've been calling the Deep Learning Conspiracy,” LeCun said with a smile.

The Deep Learning Conspiracy played a critical role in the field, mostly by virtue of sticking to its belief that instead of building individual, specialized neural nets for each type of object you wanted to detect, you could use the same template to build one neural net that could handle images, video, and speech. So instead of building one neural net to detect penguins and another for cats, you could build a single neural net that could detect both, and tell the difference. These new neural nets could also be modified for other tasks, such as looking at audio waves to detect the patterns of speech.

The Conspiracy’s research was buoyed by two important outside factors: Increases in computing power, which helped its neural nets work fast enough to be practical, and an exponential increase in available data (pictures, text, etc.) created thanks to the widespread adoption of the internet, which could be churned through the networks to make them smarter. The result eventually became a nimble, fast, accurate approach that opened up new possibilities for the field.

With the fundamentals set in place by LeCun and his compatriots, computer vision exploded in the early 2010s. Computers began recognizing objects in images, then in videos, and then live in the camera. Now, you can point a camera at a basketball and AI can understand what it’s looking at. LeCun quickly went from guy on the sidelines to a leader in the field. “It went from nobody working on it to everybody working on it within a year,” LeCun said. “It's just insane — it's completely insane.”

In December 2013, LeCun joined Facebook, an ideal environment for someone interested in applying AI research to photos. Facebook’s platform is packed with billions of images, giving LeCun and his researchers a big, broad canvas on which to implement new ideas. FAIR regularly collaborates with AML to put its research into action on Facebook proper. The two groups build new systems that make the advances available across the company. AML is using FAIR’s research to help determine what content is shown to you in the News Feed and to translate content inside Facebook; it's also deploying it inside Facebook’s camera to create special effects that react to your motion.

Thought

Teaching computers to see is an elemental step toward teaching them how the world works. Humans understand how the world operates because we watch scenarios repeated over and over, and develop an understanding of how they’ll play out. When a car comes speeding down a road we’re standing in, for instance, we predict it might hit us, so we get out of the way. When it gets dark, we predict flipping a light switch will make it light again, so we flip it.

FAIR is trying to teach computers to predict outcomes, just like humans do, using a similar method. The team, LeCun explained, is showing its AI lots of related videos, then pausing them at a certain point, and asking the machine to predict what happens next. If you repeatedly show an AI system videos of water bottles being turned over people's heads, for instance, it could potentially predict the action will get someone wet.

“The essence of intelligence, to some extent, is the ability to predict,” LeCun explained. “If you can predict what's going to happen as a consequence of your actions then you can plan. You can plan a sequence of actions that will reach a particular goal.”

Teaching artificial intelligence to predict is one of the most vexing challenges in the field today, largely because there are many situations in which multiple possible outcomes are theoretically correct.

Imagine, LeCun said, holding a pen vertically above a table and letting go. If you ask a computer where the pen will be in one second, there is no correct answer — the machine knows the pen will fall, but it can’t know exactly where it will land. So you need to tell the system that there are multiple correct answers “and that what actually occurs is but one representative of a whole set of alternatives. That’s the problem of learning to predict under uncertainty.”
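One way to see why a single "best guess" falls short here is a minimal sketch under stated assumptions: the pen is one unit long, it is equally likely to fall in any direction, and the system is scored by average squared error. The function names are hypothetical and this is not FAIR's method. Under that score, the best single guess is the average of all outcomes, which is a spot where the pen never actually lands.

```python
import math

PEN_LENGTH = 1.0  # assumed length of the pen, in arbitrary units

def landing_points(n_directions):
    """Where the pen's tip ends up, one point per equally likely fall direction."""
    points = []
    for k in range(n_directions):
        angle = 2 * math.pi * k / n_directions
        points.append((PEN_LENGTH * math.cos(angle), PEN_LENGTH * math.sin(angle)))
    return points

def average_point(points):
    """The single guess that minimizes average squared error: the mean outcome."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)

outcomes = landing_points(360)
guess = average_point(outcomes)

# Every real outcome lies one pen length from the base, yet the averaged
# guess sits almost exactly on the base itself.
distance_from_base = math.hypot(*guess)
print(distance_from_base)
```

The averaged answer is the safest bet numerically and yet describes none of the real outcomes, which is why a system has to represent the whole set of alternatives instead of collapsing them into one.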

Helping AI understand and embrace uncertainty is part of an AI discipline called “unsupervised learning,” currently the field’s cutting edge. When AI has observed enough to know how the world works and predict what’s going to happen next, it can start thinking a bit more like humans, gaining a kind of common sense, which, LeCun believes, is key to making machines more intelligent.

LeCun and his researchers allow that it will most likely take years before AI will fully appreciate the gray areas, but they’re confident they’ll get it there. “It’ll happen,” said Larry Zitnick, a research manager who works under LeCun. “But I would say that’s more of the 10-year horizon-ish.”

Speech

Back in December, Mark Zuckerberg published a splashy video demoing his “AI butler,” Jarvis. Coded by the Facebook founder himself, Jarvis made Zuckerberg toast, allowed his parents into his house after recognizing their faces, and even taught his baby daughter, Max, a lesson in Mandarin.

Jarvis was cool. But to LeCun, it was nothing special. “It’s mostly scripted, and it’s relatively simple, and the intelligence is kind of shallow, in a way,” LeCun said. His sights are set higher.

LeCun wants to build assistants, but ones that really understand what you tell them. "Machines that can hold a conversation,” he said. “Machines that can plan ahead. Machines you don't get annoyed at because they are stupid.”

There’s no blueprint for that, but FAIR is working on what may be the building blocks. Giving AI a rudimentary understanding of the world and training it to predict what might happen within it is one component. So is teaching it to read and write, which FAIR is using neural nets for, too. To a computer, an image is an array of numbers, but a spoken sentence can also be represented as an array of numbers, as can text. Thus, people like LeCun can use neural network architectures to identify the objects in images, the words in spoken sentences, or the topics in text.
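The "array of numbers" point can be sketched in a few lines. The data and helper names below are hypothetical toy examples, and the encodings are deliberately crude (real systems use richer ones), but they show three kinds of input ending up in the same form.

```python
import math

# A 2x3 grayscale "image": one brightness value (0-255) per pixel.
image = [
    [0, 128, 255],
    [64, 192, 32],
]

def flatten_image(pixels):
    """Turn rows of pixels into one flat list scaled to the range 0..1."""
    return [value / 255 for row in pixels for value in row]

def encode_text(text):
    """Represent text as one integer (its Unicode code point) per character."""
    return [ord(ch) for ch in text]

def sample_tone(frequency_hz, sample_rate, n_samples):
    """Represent a sound as amplitude samples of a pure sine tone."""
    return [
        math.sin(2 * math.pi * frequency_hz * i / sample_rate)
        for i in range(n_samples)
    ]

image_vec = flatten_image(image)
text_vec = encode_text("cat")
audio_vec = sample_tone(440, 8000, 4)

# All three are now plain lists of numbers that a network could take as input.
print(image_vec, text_vec, audio_vec)
```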

AI still can’t comprehend words the way it comprehends images, but LeCun already has a vision for what the ultimate Jarvis might look like. His ideal assistant will possess common sense and an ability to communicate with other assistants. If you want to go to a concert with a friend, for instance, you’d tell your assistants to coordinate, and they would compare your musical tastes, calendars, and available acts to suggest something that works.

“The machine has to come up with some sort of representation of the state of the world,” LeCun said, describing the challenge. “People can’t be in two places at the same time, people can’t go from New York to San Francisco in a certain number of hours, factor in the cost of traveling — there’s a lot of things you have to know to organize someone’s life.”

Facebook is currently experimenting with a simple version of such a digital assistant, called M, which is run by its Messenger team and relies on some FAIR research. Facebook Messenger recently released “M suggestions,” where M chimes into conversations in moments it thinks it can help. When someone asks “Where are you?” for instance, M can pop into the conversation and give you the option to share your location with a tap. It’s likely the company will expand this functionality into more advanced uses.

M is one application of Facebook’s efforts to use AI to understand meaning, but the company is considering other uses for the technology. It may even put it to work in an effort to break down barriers it was recently accused of helping put up.

Even before the 2016 election drew attention to polarization and fake news on Facebook, Y-Lan Boureau, a member of LeCun’s team, was working to use AI to create more constructive conversations on Facebook. Boureau, who studied neurology as well as AI, decided to pursue this project after spending a summer watching her friends fight on Facebook with little interest in hearing opposing views. “If we could better understand what drives people in terms of their state of mind,” Boureau explained, “and how opinions are formed and how they get ossified and crystallized, and how you can end up with two people being unable to talk to each other, this would be a very good thing.”

Boureau wants to create a world where we see as many opinions as we can handle, up until the point at which we start tuning them out. AI can help with this by mapping out patterns in text, understanding where something goes off the rails, and potentially figuring out a way to alter the conversation flow to stem the bad turn. “If we knew more about that learning process and how these beliefs get in people’s heads from the data then it might be easier to understand how to get more constructive conversations in general,” Boureau said.

In the aftermath of the 2016 election, LeCun publicly suggested Facebook had the technical capabilities to use AI to filter out fake news. Some saw his statement as a solution to a problem many blamed for the widespread polarization in the US, but LeCun said that the task was best left to third parties, instead of machines capable of introducing bias. “There’s a role AI can play there, but it’s a very difficult product design issue rather than a technological issue,” he said. “You don’t want to lead people into particular opinions. You kind of want to be neutral in that respect.”

Reality

Hype cycles can be dangerous for the AI field, as LeCun knows well. And today, it certainly seems like we’re in one. In the first quarter of 2013, six companies mentioned AI on earnings calls. In the first quarter of 2017, 244 did, according to Bloomberg.

LeCun is careful to couch his statements when discussing the future. “This is still very far from where we want it to be,” he’ll say. “The stuff doesn't work nearly as well as we'd like it to,” he’ll caveat. Indeed, as LeCun cautions, AI is still far from reaching human-level intelligence, or General AI, as it's known.

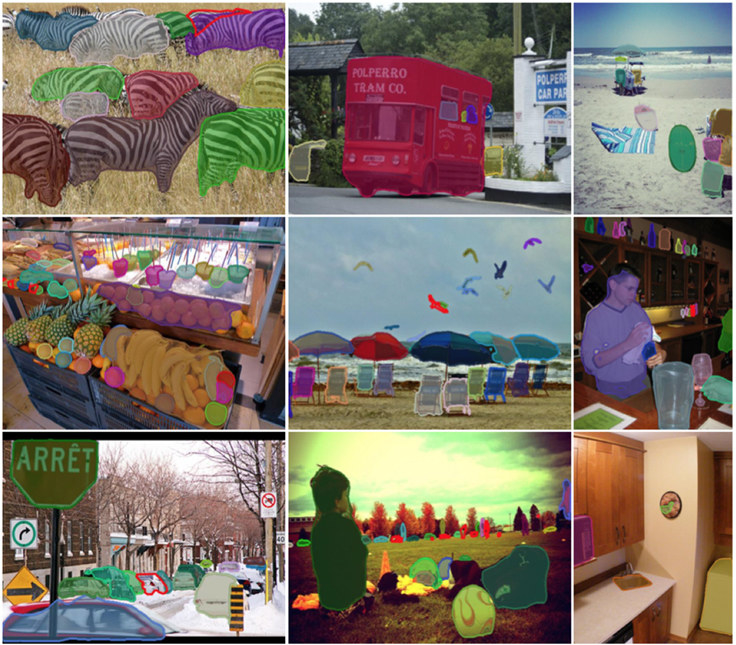

Still, sometimes LeCun can’t restrain his enthusiasm. He’s particularly excited about adversarial training, a relatively new form of AI research that could help solve the prediction and uncertainty challenges facing the field today. Adversarial training pits two AI systems against each other in an attempt to get them to teach themselves about the real world. In one FAIR experiment, for instance, a researcher has one AI system draw pictures in an attempt to trick another into guessing they were drawn by humans. The first uses feedback from the second to learn to draw better.

At a conference earlier this year, LeCun showed something even more advanced: One AI's attempt to convince a second AI that a few frames of a video it created were part of a video the second AI had already viewed. Adversarial training, LeCun said, “is the best, coolest idea in machine learning in the last 10 or 20 years.”

And so LeCun will keep playing around with adversarial training, once again pushing the field to its boundaries. He’s come a long way from the man who couldn’t even get his ideas heard 20 years ago. Though LeCun will be the first one to tell you the work is far from over, and that the success is far from his alone, he’s not one to let the moment pass without a small bit of appreciation. “I can't say it feels bad,” he said. “It feels great.”