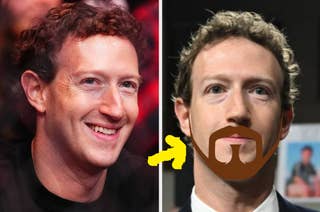

Mark Zuckerberg

People Are Thirsting Over Mark Zuckerberg's "Fake" Beard And His Silver Chain Swagger

I'm calling this new update Mark Zuckerbeard 2.0.

Celebrities Reportedly Sold Their Likenesses To Become AI Personas, And This Looks Like A Bad Sci-Fi Movie To Me

This feels like an episode of Black Mirror.

Mark Zuckerberg Just Dragged Elon Musk Publicly About Stalled Plans For Their Physical Fight, And It's More Embarrassing For Elon Than Even The Original Idea Was

"If Elon ever gets serious...he knows how to reach me."

"I Didn’t Think He Was Going To Tweet It": Grimes Took Credit For When Elon Musk Challenged Mark Zuckerberg To A Penis Measuring Contest

"Watching the father of your children in a physical fight is not the most pleasant feeling."

Elon Musk Has Declared The Fight With Mark Zuckerberg Is Actually Happening, And I'm So Curious Who'll Win

I'm sorry. It's happening, folks.

Mark Zuckerberg's Very, Very Large McDonald's Order Is Going Viral

I guess he can afford it!

Mark Zuckerberg Posted A Picture Of His Ripped Body, And Now Everyone's Freak Flag Is Flying

Oh lord, everyone has lost it.

Elon Musk And Mark Zuckerberg Are Fully Feuding, And It's Clear Who's Winning This One

The billionaires are fighting.

People Are Switching From Twitter To Threads, And These 15 Jokes About It Are So, So, So Good

Are y'all switching to Threads?

Prince Harry Allegedly Pitched A Podcast With Vladimir Putin, Mark Zuckerberg, And Donald Trump As Guests

"I have got to tell the story of the Zoom I had with Harry to try and help him with a podcast idea. It’s one of my best stories."

As If Billionaires Weren't Going Through Enough, Elon Musk And Mark Zuckerberg Have Now Agreed To A Cage Fight

"Send me the location." —Mark Zuckerberg, a man who should have infinitely better things to do

Mark Zuckerberg Posted A Thirst-Trappy Pic, And People Have Reactions

2023 vibes...

Meta Has Cut More Than 11,000 Workers In Its First-Ever Mass Layoffs

Facebook's parent company is letting go of about 13% of its staff, CEO Mark Zuckerberg announced.

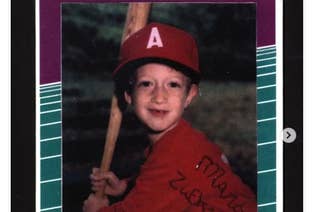

Mark Zuckerberg's Little League Baseball Card Is Now An NFT

An NFT of 8-year-old Zuck’s card could be yours.

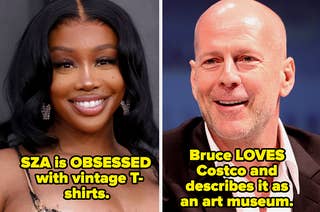

"I Feel So Much Better Buying A Top For $20" – Here Are 22 Celebs Who Love To Shop At Bargain Stores

"Give me a Salvation Army and a Goodwill and I will find stuff in there."

Mark Zuckerberg And Sundar Pichai Allegedly Signed Off On Illegal Facebook–Google Ad Deal

Newly unredacted claims from a 2020 antitrust lawsuit say that Mark Zuckerberg and Sundar Pichai personally approved an allegedly illegal 2018 advertising deal.

Can You Guess The Shared Zodiac Sign Of These Famous Pairs That Have Almost Nothing To Do With Each Other?

It's strange, but these actually make a lot of sense.

Here Are 20 Famous People Who Are Ultra Rich – Do You Think They Deserve Their Net Worth?

Meanwhile, I'm over here sifting through my junk mail looking for good Wendy's coupons.

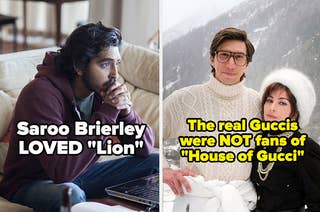

12 Famous People Who Despised The Movies Made About Their Lives, And 12 Who Said "Pass The Popcorn"

The real Guccis didn't exactly react with a standing ovation to House of Gucci.

19 Facts About Billionaires That Are Sure To Make Your Skin Crawl

Billionaires don't even know how much they're worth, and we're definitely underestimating their wealth.

Facebook's Aging Audience Has Sent The Platform Into Panic Mode

“The things that made Facebook what it is have been compounded into ‘Facebook is this place for toxic boomers and therefore younger people don't want anything to do with it.’”

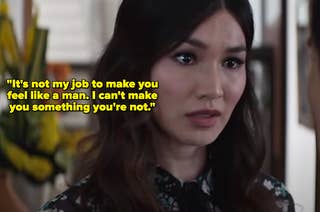

22 Movie Disses That Gave Me Secondhand Embarrassment For The Characters Receiving Them

Put some ice on those burns...

Do NOT Say Mark Zuckerberg Was Riding An Electric Surfboard

“I mean, it is defamation.”

16 Famous People Who Mentored Their Fellow Celebrities And Proved The Value Of Paying It Forward

According to Margaret Cho, Joan Rivers was "everybody’s mom."

Mark Zuckerberg Explains His Really Weird Sunscreen Face

“If someone wants to post a sunscreen meme, it’s cool. I’m happy to give the internet some laughs,” he said.

"Mark Changed The Rules": How Facebook Went Easy On Alex Jones And Other Right-Wing Figures

Facebook’s rules to combat misinformation and hate speech are subject to the whims and political considerations of its CEO and his policy team leader.

As Election Misinformation Spreads On Facebook, Mark Zuckerberg Told Employees That Biden Won

Facebook’s CEO also said Steve Bannon’s comments about beheading government officials did not warrant his complete removal from the platform.

Mark Zuckerberg Said Facebook Will Have Fewer Bans After The Election

In a companywide meeting, Facebook’s CEO said recent content rules banning hate and conspiracy content were implemented because of the US presidential election and that a wide margin of victory for either candidate could prevent violence following the vote.

Mark Zuckerberg Said Apple Has A “Stranglehold” On Your iPhone

At a companywide meeting, Facebook's CEO also said President Donald Trump brought up China during an October dinner at the White House.

Facebook Employees Are Outraged At Mark Zuckerberg's Explanations Of How It Handled The Kenosha Violence

Following days of violence and civil unrest, Facebook employees wonder if their company is doing enough to stifle militia and QAnon groups stoking violence on the social network.