A group of civil society organizations in Myanmar strongly criticized Facebook founder Mark Zuckerberg in an open letter Thursday over his recent defense of Facebook’s approach to hate speech in Myanmar.

Zuckerberg, in an interview with Ezra Klein, editor-at-large of Vox, seemed to give Facebook “systems” credit for finding and stopping the transmission of a pair of messages inciting ethnic violence in Myanmar. In reality, the letter says, those messages were discovered by civil society groups that tried in vain for days to get Facebook to address the problem.

“We believe your system, in this case, was us — and we were far from systematic,” the letter, signed by six NGOs based in Myanmar, says.

In Myanmar, where Facebook is so widely used it is virtually synonymous with the internet, the issue is particularly pressing. The social network has come under fire for failing to do enough to regulate speech inciting violence and targeting minority groups on its platforms.

In a statement on Friday, a Facebook spokesperson apologized for the fact that Zuckerberg did not say that it was the NGOs in Myanmar that brought the messages to the company's attention.

"We should have been faster and are working hard to improve our technology and tools to detect and prevent abusive, hateful or false content," the spokesperson said. "We are rolling out the ability to report content in Messenger, and have added more Burmese language reviewers to handle reports from across all our services."

More than 650,000 Rohingya Muslims have been forced to flee to Bangladesh since last summer amid a state-led campaign of violence that the UN has labeled ethnic cleansing. Last month, the chair of the UN Independent International Fact-Finding Mission on Myanmar said social media, particularly Facebook, played a “determining role” in that issue, and that the social network had “turned into a beast.”

Facebook’s own community standards ban incitement of violence and hate speech targeting a person’s ethnicity or religion. But in practice, the company depends heavily on users to report hate speech before content moderators can determine whether to take it down. Critics say the process can be clunky and opaque.

Facebook has said it is working to improve content moderation and is hiring more reviewers, including those with local language capabilities.

In the interview, when responding to a question from Klein about anti-Rohingya propaganda on Facebook, Zuckerberg said the company was “paying a lot of attention to” the issue.

He cited a case in which Facebook detected a set of messages, sent to both camps, that tried to incite violence between Buddhists and Muslims.

“I think it is clear that people were trying to use our tools in order to incite real harm,” he said. “Now, in that case, our systems detect that that’s going on. We stop those messages from going through.”

Zuckerberg did not elaborate, so it’s unclear whether the “systems” he was referring to are human or automated. It’s also unclear how the company stops such messages from being transmitted, or what criteria it uses to decide which messages to stop.

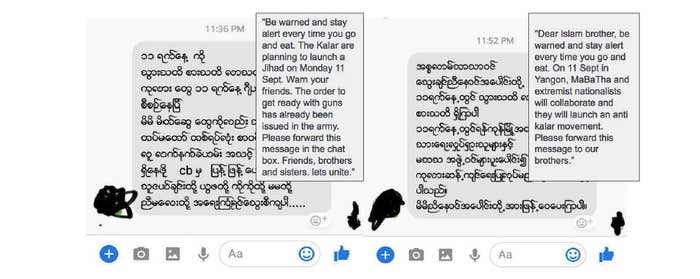

The letter includes a screenshot of two messages that were apparently mass texted to both Buddhists and Muslims, each urging the groups to take up arms against the other. It’s not clear whether these were the exact messages Zuckerberg referred to, but they closely match his description. In Myanmar’s fragile political climate, civil society groups feared they would set off violence.

Because Facebook does not offer a way to report a private message sent via Facebook Messenger, the organizations said it was difficult to figure out how to handle the situation. They ended up reaching the company by sending an email via a contact who happened to know someone at Facebook. By the time Facebook stopped the messages from spreading, they had already circulated for three days and reached thousands of people, the letter says.

“Though these dangerous messages were deliberately pushed to large numbers of people — many people who received them say they did not personally know the sender — your team did not seem to have picked up on the pattern,” the letter says. “For all of your data, it would seem that it was our personal connection with senior members of your team which led to the issue being dealt with.”

Seven months after the messages were taken down, Facebook has not briefed the groups on what happened or informed them of what measures would be taken to address such occurrences in the future, the letter says.

Civil society groups have regularly briefed Facebook’s policy team on these issues, the letter adds. But the groups were not able to meet other teams at Facebook, including product and data teams.

The letter urges Facebook to be more transparent about its content moderation process and prioritize working with local groups.