YouTube, where conspiracies about voter fraud and the outcome of the 2020 election have thrived, will begin taking down videos uploaded on or after Wednesday that contain lies and misleading information about the election.

The new policy will not apply to the thousands of misleading and conspiratorial videos uploaded before Wednesday, which came some 32 days after Joe Biden was projected as the winner.

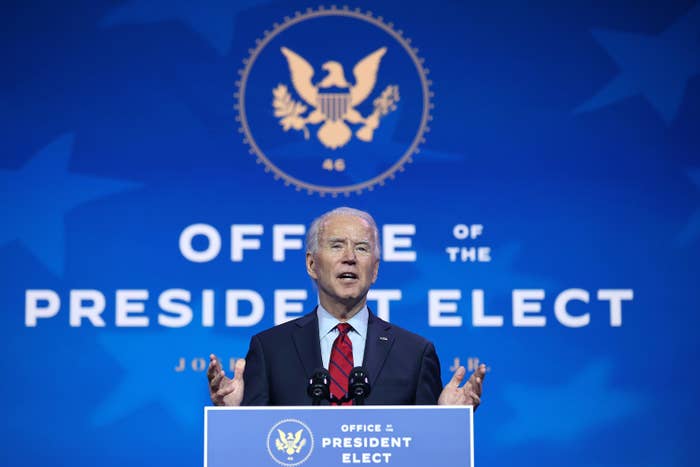

"Yesterday was the safe harbor deadline for the U.S. Presidential election and enough states have certified their election results to determine a President-elect," the company said in a statement. "Given that, we will start removing any piece of content uploaded today (or anytime after) that misleads people by alleging that widespread fraud or errors changed the outcome of the 2020 U.S. Presidential election."

The so-called safe harbor deadline is the date by which state-level election challenges are generally supposed to have been completed.

Spokesperson Ivy Choi confirmed to BuzzFeed News that false election content uploaded prior to Dec. 9 is allowed to remain on the platform.

"Content that is not violative / stays up will show our information panel, which will be updated to reflect the election results certification status," Choi said.

Like other social media platforms, YouTube — whose parent company Alphabet also owns Google — has failed to assertively push back against falsehoods about the election.

On Nov. 4, One America News Network, a pro-Trump, far-right cable channel that Trump himself has personally promoted, uploaded a video claiming that "President Trump won four more years in office last night" as millions of votes in key swing states were being counted.

Despite intense backlash, YouTube declined to remove the video, which has nearly half a million views. Instead, it slapped a notice on the page, first saying that the results were not final and then, days later, noting that the Associated Press had projected Biden as the winner.

Conspiracies about the election results have similarly spread on other platforms.

Trump's favorite website, Twitter, has plastered lukewarm warning labels on false and misleading tweets about the election — including from the president himself — but has avoided taking down those tweets altogether.

Facebook, known for its reluctance to reprimand right-wing pages that promote conspiracies and violence on the website, also opted to label misleading posts about the election from Trump. BuzzFeed News reported in mid-November that internal data show those labels have been ineffective in discouraging people from sharing and engaging with those posts.