YouTube on Friday said it would prevent channels that promote anti-vax content from running advertising, saying explicitly that such videos fall under its policy prohibiting the monetization of videos with “dangerous and harmful” content. The move comes after advertisers on YouTube pulled their ads from these videos, following inquiries from BuzzFeed News.

“We have strict policies that govern what videos we allow ads to appear on, and videos that promote anti-vaccination content are a violation of those policies. We enforce these policies vigorously, and if we find a video that violates them, we immediately take action and remove ads,” a YouTube spokesperson said in an email statement to BuzzFeed News.

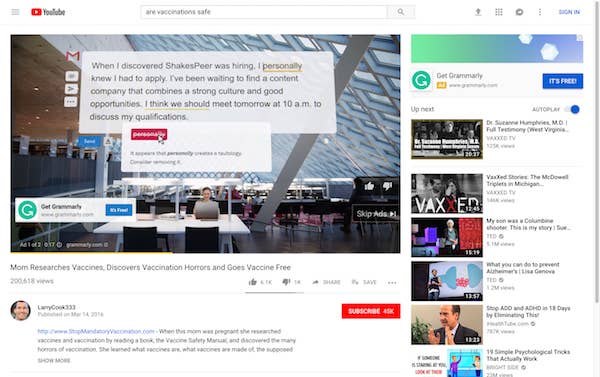

Earlier this week, BuzzFeed News found that while YouTube usually returns a top search result for queries like “are vaccines safe” from an authoritative source such as a children’s hospital, its Up Next algorithm frequently suggested follow-up recommendations for anti-vaccination videos.

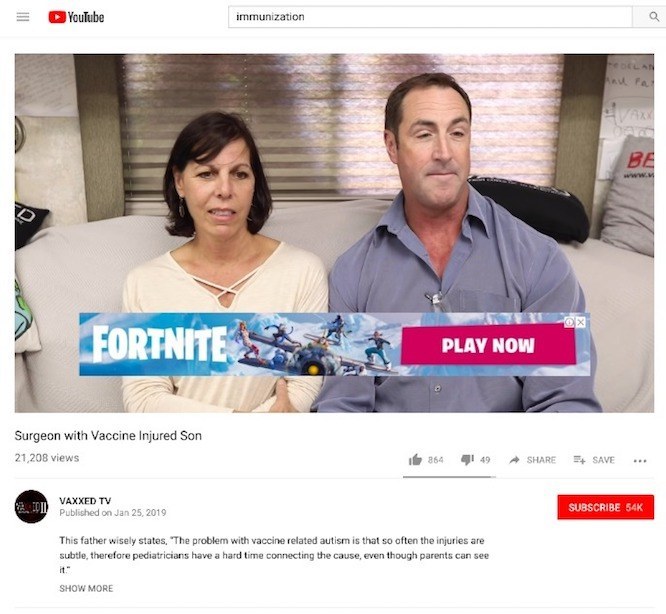

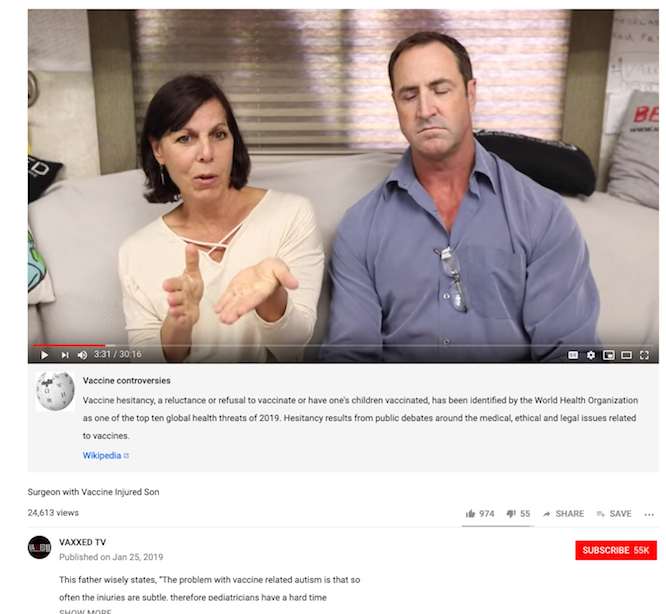

Seven different advertisers said they weren’t aware their ads were appearing on videos like “Mom Researches Vaccines, Discovers Vaccination Horrors and Goes Vaccine Free,” which advocates against vaccinating children, and reached out to YouTube to pull the programmatic placements.

Their ads appeared on videos from channels including VAXXED TV, LarryCook333 (a proponent of StopMandatoryVaccinations.com), and iHealthTube, all of which YouTube has since demonetized, or prevented from running ads.

In addition to demonetizing anti-vax content, YouTube also introduced a new information panel pertaining to vaccines. Previously, information panels appeared on anti-vax videos that explicitly mentioned the measles, mumps, rubella (MMR) vaccine, and only described what the MMR vaccine is for and linked to its Wikipedia page. Now, a considerably larger number of anti-vax videos have an information panel that links to the Wikipedia page for "vaccine hesitancy", where it is described as "one of the top ten global health threats of 2019" according to the World Health Organization.

Nomad Health, a health tech company, told BuzzFeed News that it “does not support the anti-vaccination movement,” was “not aware of our ads running alongside anti-vaccination videos,” and would “take action to prevent it from happening in the future.”

A discount vitamin company whose ads appeared on anti-vax videos, Vitacost, said it pulled its ads from YouTube altogether on Tuesday after a YouTube creator revealed that ads were running on sexually exploitative videos of children.

“We pulled all YouTube advertising on Tuesday morning when we noticed content issues. We had strict rules to prevent our ads from serving on sensitive content and they were not effective as promised,” a spokesperson for Vitacost told BuzzFeed News via email.

“We will continue to remain off of the platform until those changes are made and are proven to be effective by other advertisers,” the Vitacost spokesperson said.

The YouTube advertisers contacted by BuzzFeed News about their ads on anti-vax content said they were unaware that their programmatic ads, which are controlled algorithmically, were appearing alongside such videos.

“When we purchase programmatic media, we specify parameters that restrict the placement of our ads from association with certain content. Even so, however, sometimes ads get served in places that we don’t approve of. This is one of those cases,” said a spokesperson for Retail Me Not, a discount drug company. “We’re working to exclude this placement now.”

“Upon learning of this, we immediately contacted YouTube to pull our ads from appearing not only on this channel but also to ensure related content that promulgates conspiracy theories is completely excluded,” said a spokesperson for Grammarly, a writing software company. “We have stringent exclusion filters in place with YouTube that we believed would exclude such channels. We’ve asked YouTube to ensure this does not happen again.”

Earlier this week, Grammarly and other advertisers also asked YouTube to pull their ads from videos of young children that were apparently being viewed and commented on by a network of pedophiles. Nestle, AT&T, Hasbro, Kellogg, and Epic Games were among the brands that pulled their ads over the child pornography controversy.

“Any content – including comments – that endangers minors is abhorrent, and we have clear policies prohibiting this on YouTube. We took immediate action by deleting accounts and channels, reporting illegal activity to authorities, and disabling comments on tens of millions of videos that include minors,” YouTube told USA Today on Thursday. “There’s more to be done, and we continue to work to improve and catch abuse more quickly.”

Other companies that asked YouTube to stop their ads from appearing alongside anti-vax content include:

Brilliant Earth, a jewelry company, which said it has “made internal adjustments to our ad settings and will also follow up with our advertising partners to prevent our ads from appearing next to this content.”

CWCBExpo, a marijuana trade show, which said it would be “implementing strict guidelines on content placement and is eliminating hundreds of YouTube channels/videos and negative keywords.”

XTIVIA, which said it was “reviewing the ad placement,” which was “not [its] requested target.”

SolarWinds, a software company, which said the placement was unintentional and that it had “adjusted [its] filters to further refine the targeting of our ads on YouTube to better align with our targeted audience, MSPs and technology professionals.”

Larry Cook, the anti-vax leader behind StopMandatoryVaccinations, said on Facebook that his “entire channel” was demonetized, but that YouTube hadn’t contacted him about the change.

“It is … unfortunate that YouTube does not see the value in advertisers reaching a very large and thriving demographic who believe in alternative medicine, holistic health and natural remedies,” Cook told BuzzFeed News via email. “Shutting down monetization on alternative health channels just means that alternative health advertisers will go elsewhere to reach their intended audience.”

Following a measles outbreak, US Rep. Adam Schiff demanded last week that Facebook and Google, which owns YouTube, address the risks of the spread of medical misinformation about vaccines on their platforms. Facebook responded last week by saying it would take “steps to reduce the distribution of health-related misinformation on Facebook.”

Prior to Schiff’s request, YouTube said it was working on algorithmic changes to reduce the appearance of conspiracy theories in its Up Next recommendations, a category that contains some anti-vax videos, but not all. After BuzzFeed News' report earlier this week, YouTube said, “like many algorithmic changes,” the alterations to its Up Next recommendation system “will be gradual and will get more and more accurate over time.”