Dr. Free Hess, a mother and pediatrician from Gainesville, Florida, recently discovered and reported a trove of content on the YouTube Kids app depicting suicides, school shootings, and violence against female characters.

The near-dozen videos Hess flagged have since been deleted, but she told BuzzFeed News she fears there are many more videos still on the platform that kids are being exposed to.

“I found about 10 [videos] very quickly and very easily, but stopped there simply because I wanted to get the blog post out, not because there weren’t more,” she said. Hess screen-recorded the cartoons she found and shared them Friday on her blog, PediMom.com.

Among the videos she discovered is a cartoon inspired by Minecraft’s graphics called “Monster School: SLENDERMAN HORROR GAME.” In the game, a character finds their victim in school, behind a desk, and shoots them. The shooter is seen laughing afterward.

The animated series is hosted on an account called TellBite, which has over 167,000 subscribers on its main YouTube channel. Its videos rack up thousands of views.

(Note: This account and others are first created on YouTube, which only allows users ages 13 and older to post. The YouTube Kids app claims to take content on YouTube and curate the videos to find those that are kid-friendly, “to make it safer and simpler for kids to explore the world through online video.”)

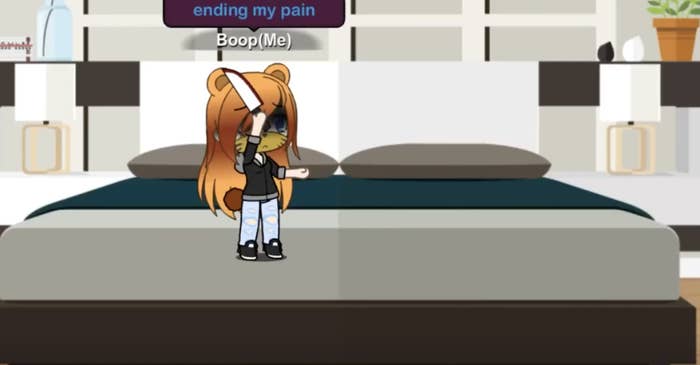

In another clip first posted to YouTube and created by the account Toasty Qween, a young female character is seen attempting to kill herself with a knife before her dad, whose death is shown earlier, intervenes.

“Ending my pain,” her speech bubble says in the clip. The entire video is set to the song “Don’t You Worry Child.”

The original video on YouTube currently has over 1 million views.

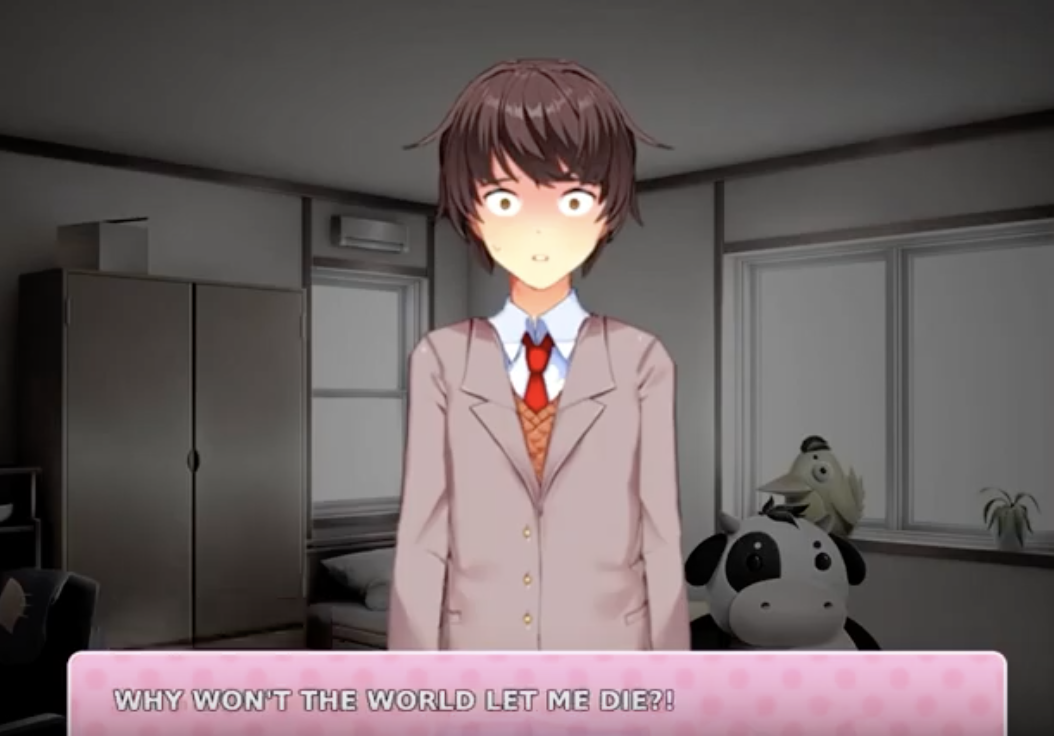

Suicide ideation and attempts are seen in other animated videos found on YouTube Kids, like one titled “Doki! Doki! Rainclouds New End!!!” In this one, a character narrates an attempted suicide. “Why won’t the world let me die?” the character says.

In the clip, a second character is introduced and described as someone who arrived at the suicide scene “just in time” to attempt to talk the main character down.

“Why couldn’t he just let me hang myself?” the main character says in response to the second character’s appearance.

Hess said she’s found several other cartoons on the app that may not have originally been intended for kids, but depict toxic and dangerous relationship dynamics.

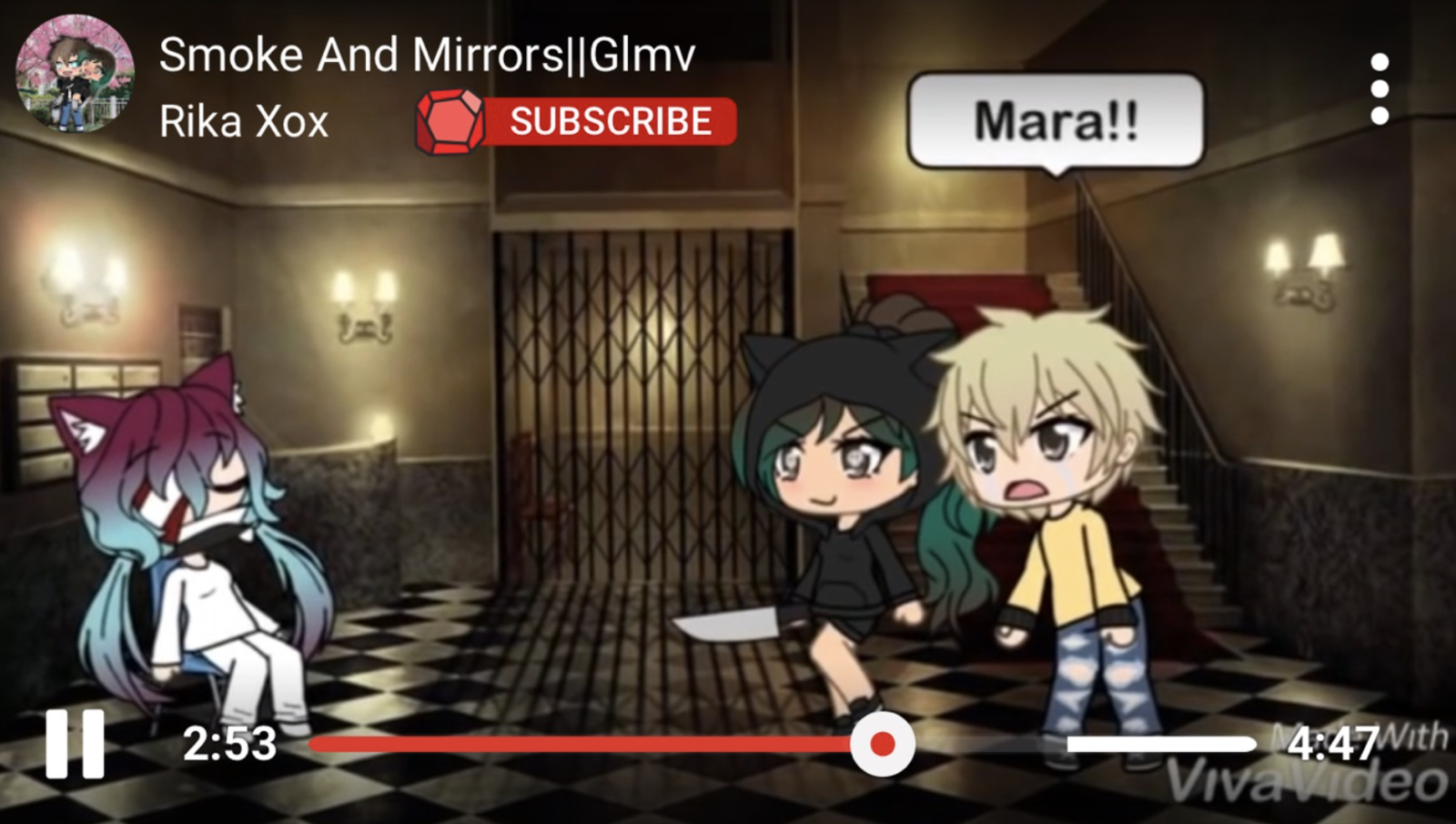

In fact, during her correspondence with BuzzFeed News, she found another cartoon on the app called “Smoke in Mirrors” by the user Rika Xox. In this video, a character “just stabbed a girl in the chair,” she said, while providing screenshots of the scene.

As with the other videos, she promptly reported it and is waiting for YouTube to take it down.

In a statement to BuzzFeed News, YouTube insists it “take[s] feedback very seriously,” and that it is working to “ensure the videos in YouTube Kids are family-friendly.”

“We appreciate people drawing problematic content to our attention, and make it possible for anyone to flag a video. Flagged videos are manually reviewed 24/7 and any videos that don’t belong in the app are removed,” a spokesperson said.

The company added that while it is “making constant improvements to our systems,” “there’s more work to do.”

Last week, YouTube confirmed it removed a video of a popular children’s Splatoon-style cartoon. The removal was prompted when parents discovered a troll had reuploaded the cartoon with a spliced-in clip of a former YouTube personality encouraging children to cut themselves.

Hess and other parents had aggressively reported that user and cartoon before it was finally removed. She does not believe YouTube is taking the matter seriously enough or doing enough to censor content before children are able to access it.

“I would like them to recognize the dangers associated with this for our children [and] to be taking parents’ concerns seriously,” she said.

The National Suicide Prevention Lifeline is 1-800-273-8255. Other international suicide helplines can be found at befrienders.org.