Rise Of Robots Means UK Is "Sleepwalking" Into Danger, Says Artificial Intelligence Pioneer

Professor Noel Sharkey says British government's "pathetic" policies are leaving the country at risk.

The British government is "rushing headlong into the robotics revolution" with no heed for the many dangers that the technology brings, says a leading artificial intelligence researcher.

Professor Noel Sharkey, an emeritus professor of artificial intelligence and robotics at Sheffield University, said that British policies on robotics are "pathetic", and that the country is "sleepwalking into it, leaving it until we can't do anything about it, as we have with the internet".

Speaking at the launch of the Foundation for Responsible Robotics (FRR) at the Wellcome Trust in London, he said that the government had invested just £17 million in robotics research, and that "the effects on society aren't mentioned at all", while the ethical implications of robotics garnered only a single mention low down in one policy document.

He said that millions of British jobs were under threat from automation – he pointed to the rise of agricultural robots that are putting farm labourers out of business – and that the rise of robotic technologies in other areas such as policing and health care could have a detrimental impact on human rights. Elder-care robots have cameras to film residents, he said, while autonomous weapons are increasingly used against civilians. In one state in the US, he said, police drones can legally be armed with non-lethal weapons, and in South Africa a firm has sold 25 drones carrying pepper spray and rubber-bullet guns to a mining company to stop riots among striking miners.

Terrorism is also a concern, he said. "The FBI has already expressed concern about self-driving cars. All you'd have to do is load 50 robot cars with explosives and programme in the coordinates for the target, and no one would be able to stop them." Ethical considerations for people's dignity, privacy, and safety need to be programmed in, he said.

The panel also raised the possibility that as robots become more lifelike, they will have a profound impact on social life. "Social robotics has the potential to change who we are," said Professor Johanna Seibt of Aarhus University. Humans cannot help but see lifelike robots as alive, as "social agents", she said, so we will form attachments to them. She said that studies have already shown that humans are willing to lie to other humans in order to protect a robot.

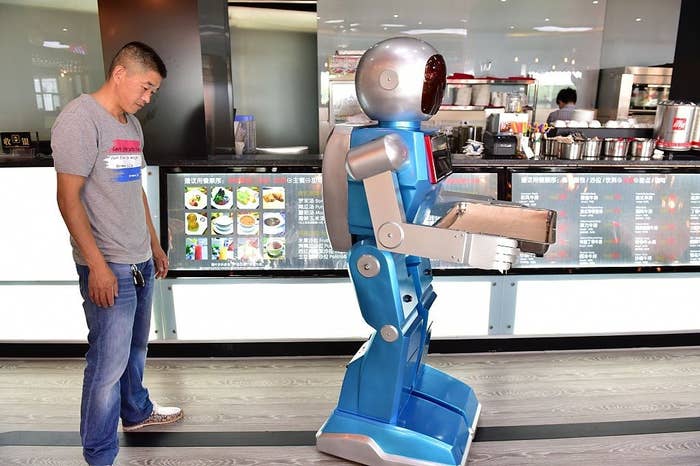

The International Federation of Robotics predicts that there will be 31 million "service robots" worldwide by 2018, up from fewer than 200,000 today, working in every field from agriculture to food preparation to the military. Andy Haldane of the Bank of England has predicted that 15 million British jobs could be replaced by robots and other new technologies. "It's time to think hard about the future of the technology before it sneaks up and bites us," said Sharkey.

He said, "In the 1980s, those of us who were already using the internet would have laughed at you if you'd said that the internet was being used for shopping and child porn." But because policymakers weren't thinking about the growth of the new technology, it became ungovernable, he said. "It's too late to do anything about it now. But we don't want robotics to end up with [the internet's] problems of privacy and surveillance and safety.

"We must strive for responsible and accountable developments in robotics, without stifling innovation."