In a companywide meeting on Thursday, Facebook CEO Mark Zuckerberg said that a militia page advocating for followers to bring weapons to an upcoming protest in Kenosha, Wisconsin, remained on the platform because of “an operational mistake.” The page and an associated event inspired widespread criticism of the company after a 17-year-old suspect allegedly shot and killed two protesters Tuesday night.

The event associated with the Kenosha Guard page, however, was flagged to Facebook at least 455 times after its creation, according to an internal report viewed by BuzzFeed News, and had been cleared by four moderators, all of whom deemed it “non-violating.” The page and event were eventually removed from the platform on Wednesday — several hours after the shooting.

“To put that number into perspective, it made up 66% of all event reports that day,” one Facebook worker wrote in the internal “Violence and Incitement Working Group” to illustrate the number of complaints the company had received about the event.

BuzzFeed News could not verify the content on the militia page or its associated event because they had been removed from the platform. A previous story from the Verge noted that the page had issued a “call to arms” and hosted a number of commenters advocating for violence in Kenosha following the police shooting of Jacob Blake, a 29-year-old Black man.

A Facebook spokesperson declined to comment.

The internal report seen by BuzzFeed News reveals the lengths to which concerned Facebook users went to warn the company about a group calling for public violence, and how the company failed to act. “The event is highly unusual in retrospect,” reads the report, which notes that the next most-reported event that day had been flagged 18 times by users, compared with 455 times for the Kenosha Guard event.

After militia members gathered in Kenosha on Tuesday night, a 17-year-old with a rifle allegedly killed two protesters. Facebook has maintained that the suspect, whose Facebook and Instagram profiles were taken down after the incident, had no direct connection with the Kenosha Guard page or event.

During Facebook’s Thursday all-hands meeting, Zuckerberg said that the images from Wisconsin were “painful and really discouraging,” before acknowledging that the company had made a mistake in not taking the Kenosha Guard page and event down sooner. The page had violated Facebook’s new rules introduced last week that labeled militia and QAnon groups as “Dangerous Individuals and Organizations” for their celebrations of violence.

The company did not catch the page despite user reports, Zuckerberg said, because the complaints had been sent to content moderation contractors who were not versed in “how certain militias” operate. “On second review, doing it more sensitively, the team that was responsible for dangerous organizations recognized that this violated the policies and we took it down.”

During the talk, Facebook employees hammered Zuckerberg for continuing to allow the spread of hatred on the platform.

“At what point do we take responsibility for enabling hate filled bile to spread across our services?” wrote one employee. “[A]nti semitism, conspiracy, and white supremacy reeks across our services.”

The internal report seen by BuzzFeed News sheds more light on Facebook’s failure.

“Organizers… advocated for attendees to bring weapons to an event in the event description,” the internal report reads. “There are multiple news articles about our delay in taking down the event.”

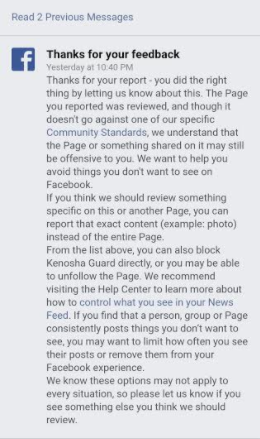

One Facebook user who flagged the Kenosha Guard page “for a credible threat of violence” was told “it doesn’t go against one of our specific Community Standards,” according to a screenshot they sent to BuzzFeed News.

In addition to the four manual reviews that determined the Kenosha Guard page to be non-violating, the Facebook report also noted that a number of reviews that “were handled by automation” had reached the same conclusion. As part of a proposed change, the Facebook employee writing the report said that the company should monitor spikes in feedback reports for events and “trigger investigation immediately given this has proved to be a good signal for imminent harm.”

The report seems to acknowledge that Facebook was late to act.

“This post provides more details around what happened and changes we are making to detect and investigate similar events sooner,” the worker wrote. “This is a sobering reminder of the importance of the work we do, especially during this charged period.”