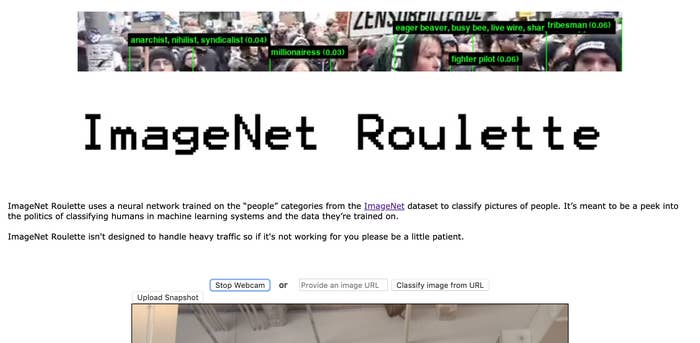

This week, Twitter has been inundated with pictures generated by ImageNet Roulette. The photos are typically of a person's face, framed by a green box with a black-and-green tag showing an automatically generated label for the person, based on the software's best guess of what social role they occupy.

ImageNet Roulette is an art project created by Berlin-based developer Leif Ryge, working in collaboration with artist and researcher Trevor Paglen and AI researcher Kate Crawford. It's part of an exhibition called Training Humans, currently showing in the Osservatorio Fondazione Prada in Milan, which explores how datasets and automated systems represent and classify humans.

ImageNet Roulette, a web extension of the exhibition, is designed to demonstrate that classification in action. But in practice it sucks, turning out mostly gibberish. Worse, when people of color put their images into it, the app can spit back out shockingly racist and vile labels based on their ethnicities.

Ryge and Paglen's studio have not yet responded to a request for comment.

My face got categorized as “face” #imagenetroulette

Crawford acknowledged in a series of tweets earlier this week how bad AI is at interpreting pictures of humans, and how that's the point of the project.

"The labels come from WordNet, the images were scraped from search engines. The 'Person' category was rarely used or talked about. But it's strange, fascinating, and often offensive," Crawford wrote. "It reveals the deep problems with classifying humans — be it race, gender, emotions or characteristics. It's politics all the way down, and there's no simple way to 'debias' it."

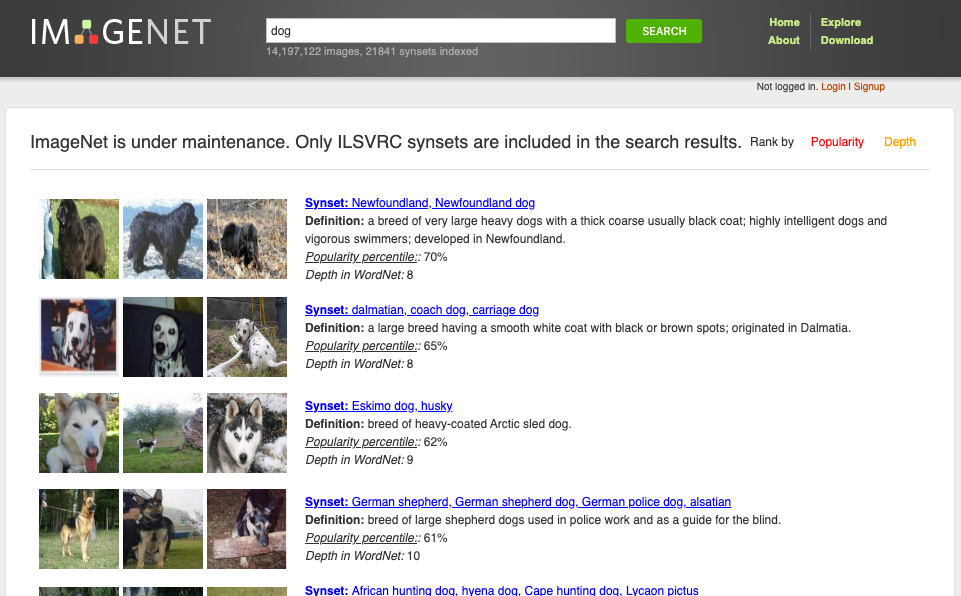

ImageNet, the system on which the app is built, is a research project created at Stanford University and Princeton University. It's an image dataset organized according to WordNet, a database created at Princeton of English nouns, verbs, adjectives, and adverbs. The WordNet database is where the labels come from.

"ImageNet was created in 2009 as — and continues to be — an academic research project to help computers identify generic objects, for example a microwave, zebra, or fountain," a spokesperson for the ImageNet Research Team told BuzzFeed News. "In August 2019, we submitted our new research on improving the ImageNet data to an academic computer science conference for peer review — see our research post here. We are awaiting the results of the review process and we welcome input and suggestions from the research community and beyond on how to build better and fairer datasets for training and evaluating AI systems."

Here's how this all looks in ImageNet.

A search for "dog" brings up various subsets like "dalmatian, coach dog, carriage dog" or "dogsled, dog sled, dog sleigh." Click on one of those and you'll be directed to additional subsets, and so on.

So when you put your photo into ImageNet Roulette, it does the same thing. You give it a face, and the system uses ImageNet's database (which in turn uses WordNet's database) to classify you.

uh oh

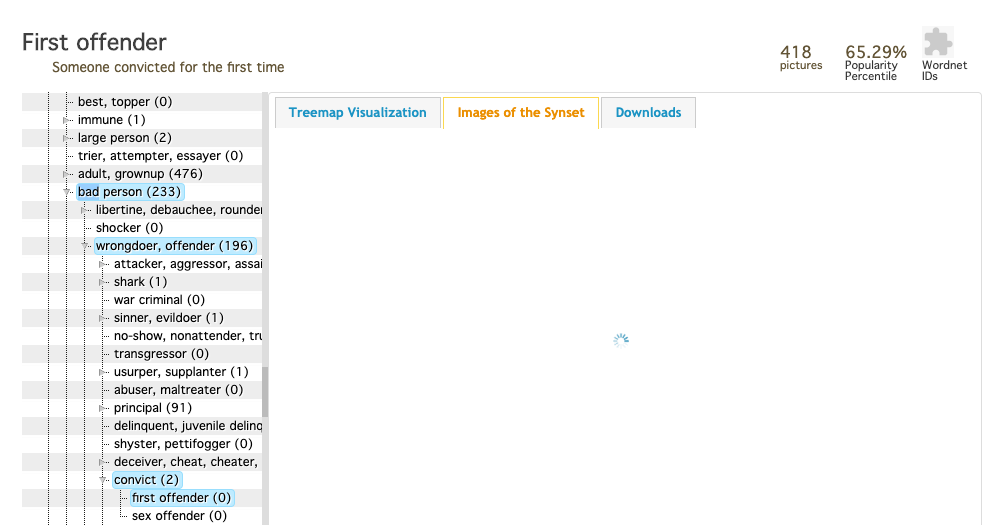

In the example above, ImageNet Roulette classified me as a "first offender," which is part of the following hierarchy: "person, individual, someone, somebody, mortal, soul" > "bad person" > "wrongdoer, offender" > "convict" > "first offender."

This is, of course, incorrect. I have never been convicted of a crime.
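For readers who want to see how a nested label like this resolves, here is a minimal Python sketch of walking the hierarchy described above. The `PARENT` table and the `hierarchy_path` function are hypothetical names made up for illustration; the category names are copied from the chain quoted in this article, and nothing here reflects how ImageNet or WordNet actually store their data.

```python
# Hypothetical parent links, copied from the hierarchy quoted in the article.
# This is an illustrative toy, not WordNet's or ImageNet's real data format.
PARENT = {
    "first offender": "convict",
    "convict": "wrongdoer, offender",
    "wrongdoer, offender": "bad person",
    "bad person": "person, individual, someone, somebody, mortal, soul",
}


def hierarchy_path(label, parent=PARENT):
    """Return the chain of categories from the top-level category down to `label`."""
    path = [label]
    # Follow parent links upward until we reach a category with no parent.
    while path[-1] in parent:
        path.append(parent[path[-1]])
    # The walk went from leaf to root, so reverse it for display.
    return list(reversed(path))


if __name__ == "__main__":
    print(" > ".join(f'"{name}"' for name in hierarchy_path("first offender")))
```

Every label sits at the end of a chain like this one, so a single guess about a face carries all of the broader categories above it.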

Here's how that looks inside ImageNet.

WordNet, the source of these classifications, was started in 1985 with the words in the Standard Corpus of Present-Day Edited English. It has since added many more words to its database from various published works, creating a pretty scattershot lexicon, which might explain some of the strange results ImageNet Roulette has been generating. For instance, here's what it generated for my editor Scott. If you're familiar with the phrase "grass widower," congratulations.

funny, "grass widower, divorced man," is actually entirely accurate https://t.co/nb7rYpVHxT

But ImageNet Roulette has not been particularly humanizing for people of color, which its creators say is part of the point.

While Scott and I, both white men, were classified in categories other than race, many people of color who submitted images to ImageNet Roulette were classified solely by their ethnicity, sometimes into categories with racist labels.

tfw when you get a press release about an AI photo thing that you've seen lots of other tech reporters having fun with but then it's actually not that fun

Fascinating insight into the classification system and categories used by Stanford and Princeton, in the software that acts as the baseline for most image identification algorithms.

Well, this feels quite literal and accurate.

fascinating tbh

As the makers of ImageNet note, the system regularly classifies people in "dubious and cruel ways." That has real-world implications: WordNet is used in machine translation and crossword puzzle apps. And ImageNet is currently being used by at least one academic research project.

"It’s meant to be a peek into the politics of classifying humans in machine learning systems and the data they’re trained on," Paglen's studio wrote on its website.

"We want to shed light on what happens when technical systems are trained on problematic training data. AI classifications of people are rarely made visible to the people being classified. ImageNet Roulette provides a glimpse into that process — and to show the ways things can go wrong."