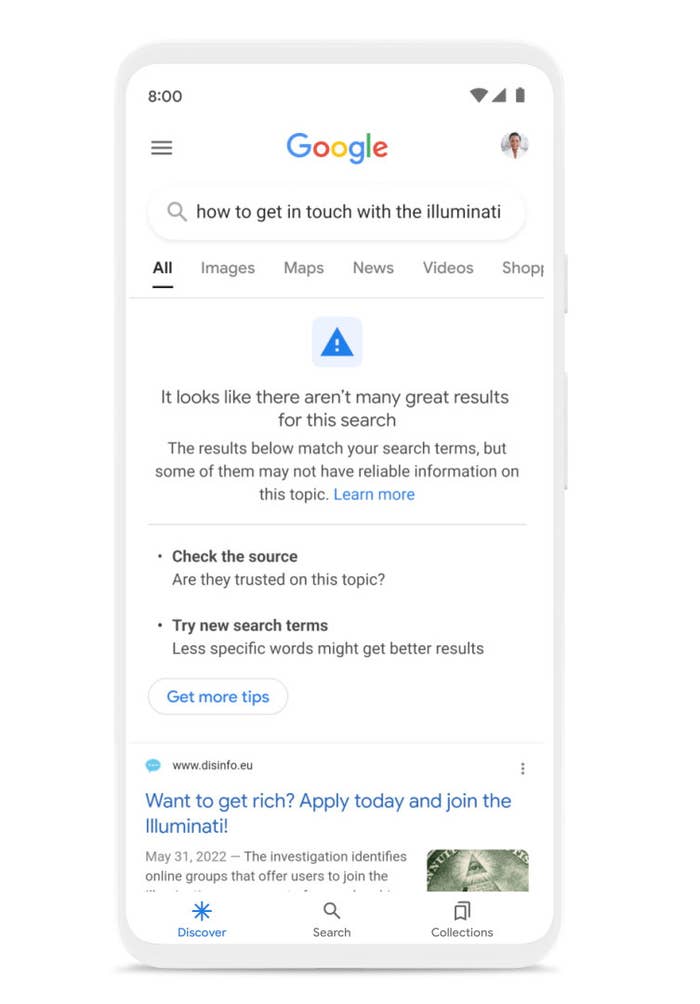

If Google isn’t confident about the overall quality of search results when you search for something, it will now let you know at the top of the results page.

“This doesn’t mean that no helpful information is available, or that a particular result is low-quality,” Pandu Nayak, Google’s vice president of search, said in a blog post that the company published on Thursday. Rather, the warning, which Google calls a “content advisory,” will show up only when the site’s algorithms decide that you need context about an entire set of results on a page. Despite the notice, you will still be able to see individual search results.

As an example, Google cited a search for getting in touch with the Illuminati, a reference to a popular conspiracy theory that a society of elites secretly controls the world.

The move is part of a broader set of updates that the world’s most popular search engine is making to improve the quality of information that it surfaces in a world racked by online misinformation and disinformation. Most people now think of Google as the place to find direct answers to questions, rather than links to pages that will hopefully be helpful, and the search engine has had to evolve to deal with this.

“We’re [not] able to know the truth of billions of documents on the web,” Nayak said. “It’s not something that algorithms can detect. And it’s not something that any one person or even a large organization can do. So, instead, what we have developed is a process for understanding signals of quality and reliability of sources on the web.”

Google already shows similar content advisories when a search topic is new or rapidly evolving and there aren’t yet enough reliable sources to show in results. The new advisory will be one more way to signal to people that they shouldn’t rely indiscriminately on Google as a source of truth and facts.

In addition to fessing up when it thinks that the quality of a set of search results isn’t great, Google is also making some other incremental changes.

It’s improving the quality of “snippets,” the little nuggets of information that it scrapes from webpages and offers up in bold as answers right at the top of the site when you ask specific questions like, “How long is the Great Wall of China?”

If you now search for a question that has no real answer, Google will refrain from providing a snippet with information that’s only partially correct. As examples, Google cited “What year did Alexander Graham Bell first write ‘Call Me Maybe’?” and “When did Snoopy assassinate Abraham Lincoln?”, although who exactly is searching for these is still unclear. (Previously, Google showed Lincoln’s assassination date for the second query, ignoring the fact that a comic strip beagle did not, in fact, kill the former president.) Google said it has reduced how often snippets are triggered in instances like these by 40%, although a company spokesperson declined to share the total number of search queries that triggered them in the first place.

These improvements to snippets will also show up in the “People Also Ask” section on the search results page, Nayak said.

A final new feature is coming this week to people who use the Google app on iPhones and iPads, for whatever reason, instead of a browser: If you need more context about a website you’re on, such as its reviews, how widely it is cited as a source, who owns it, and more, you can swipe up from the bottom navigation bar to find out. The feature will come to Android, Google’s own operating system for phones and tablets, later this year.

Still, none of these improvements will apply to YouTube, the other big Google-owned platform where misinformation sometimes runs rampant. “Their problem is a little bit different than ours,” Nayak said, because, unlike Google Search, YouTube actually hosts videos and serves them up algorithmically. “We don’t share code bases directly. We don’t work on YouTube directly and YouTube doesn’t work on us directly.”