Readers, hello!

We’re back, and we’ve revamped the newsletter this year. Craig and I will alternate between bringing you an in-depth look at a topic and highlighting other work worth reading. Send us feedback and tips: fakenewsletter@buzzfeed.com. OK, let’s get into it.

After 18 months of probing, the UK House of Commons Digital, Culture, Media and Sport Committee released its final report on disinformation at midnight on Monday. The report amounts to a 100-page condemnation of Facebook, calling the company “digital gangsters.” Here are three things that stood out to me:

The report calls for algorithm transparency. “Just as information about the tech companies themselves needs to be more transparent, so does information about their algorithms,” the report says. Algorithms decide what’s put in front of our eyes across the web in news feeds, search results, and video platforms, so it makes sense for us to know how those decisions are made. This isn’t a new idea. Over at MIT, researchers came up with the concept of an AI nutrition label.

Inferred data needs to be protected. Inferred data is information about you that you didn’t directly provide to the social network; the company derives it by analyzing other data it collects about you. Another name for it is a “shadow profile.” That data is what allows advertisements to be targeted at people who might be interested in a business or, say, a political message. The data clearly has value to tech giants: the report says that even when a user deactivates their Facebook profile, the company keeps data from that profile and even data on their friends.

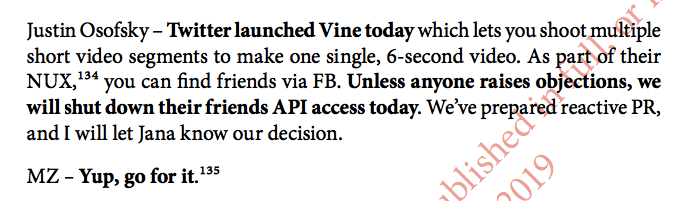

Facebook contributed to Vine’s death. It’s well-known that Facebook targets its competitors, but Vine’s story is the perfect illustration of the power Facebook’s data has over other tech companies. Facebook revoked Vine’s access to users’ friends lists, cutting off the video app’s ability to grow its audience. Lack of audience growth was one reason Vine cited for shutting down. Here’s a remarkable exchange between Mark Zuckerberg and Facebook VP of global operations and media partnerships Justin Osofsky:

Why is any of this important? The Committee brought representatives from nine countries to the hearings (Argentina, Belgium, Brazil, Canada, France, Ireland, Latvia, Singapore and the UK), so it’s possible that the participating countries will adopt similar policies and amplify the report’s impact.

— Jane

From our team:

- Twitter Suspended A DC Think Tank For Violating Its Rules Against Fake Accounts

- How An Apocalyptic Preacher And QAnon Followers Made A False Pope Francis Quote Go Viral

- Scammers Are Tricking People Into Buying Puppies That Don't Exist

- People Are Renting Out Their Facebook Accounts In Exchange For Cash And Free Laptops

Craig’s recommended reads:

Fake faces, fake stories: There’s a website to test whether you can tell the difference between a face generated by artificial intelligence and one of an actual person. Try it for yourself. Another harbinger of the synthetic times ahead is this Bloomberg story about an AI research group that developed software “that can produce authentic-looking fake news articles after being given just a few pieces of information.”

Google boasts about how it fights disinformation: The search and ad giant released a detailed overview of all the ways it is supposedly battling online disinformation. Please don’t read it without also checking out this quick analysis of it from researcher Aviv Ovadya.

How YouTubers get pushed towards conspiracy content: Vice’s Motherboard has a great look at how the incentives of YouTube drive some of its prominent creators to veer into conspiratorial content. (We showed how the same dynamic is also at play with the platform’s “super chats” feature.)